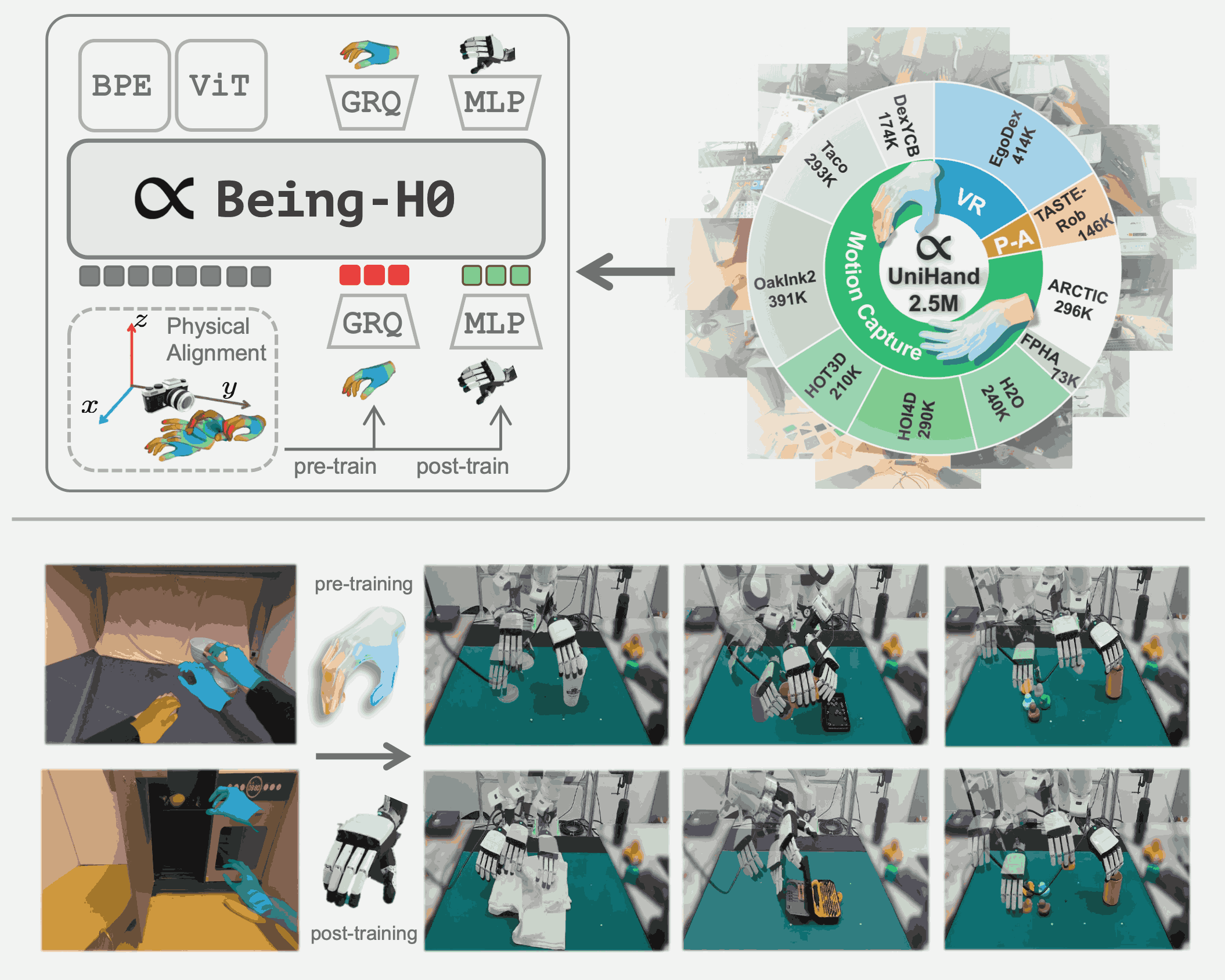

Being-H0: Vision-Language-Action Pretraining from Large-Scale Human Videos

All Papers

\* equal contribution † corresponding author

Being-H Series

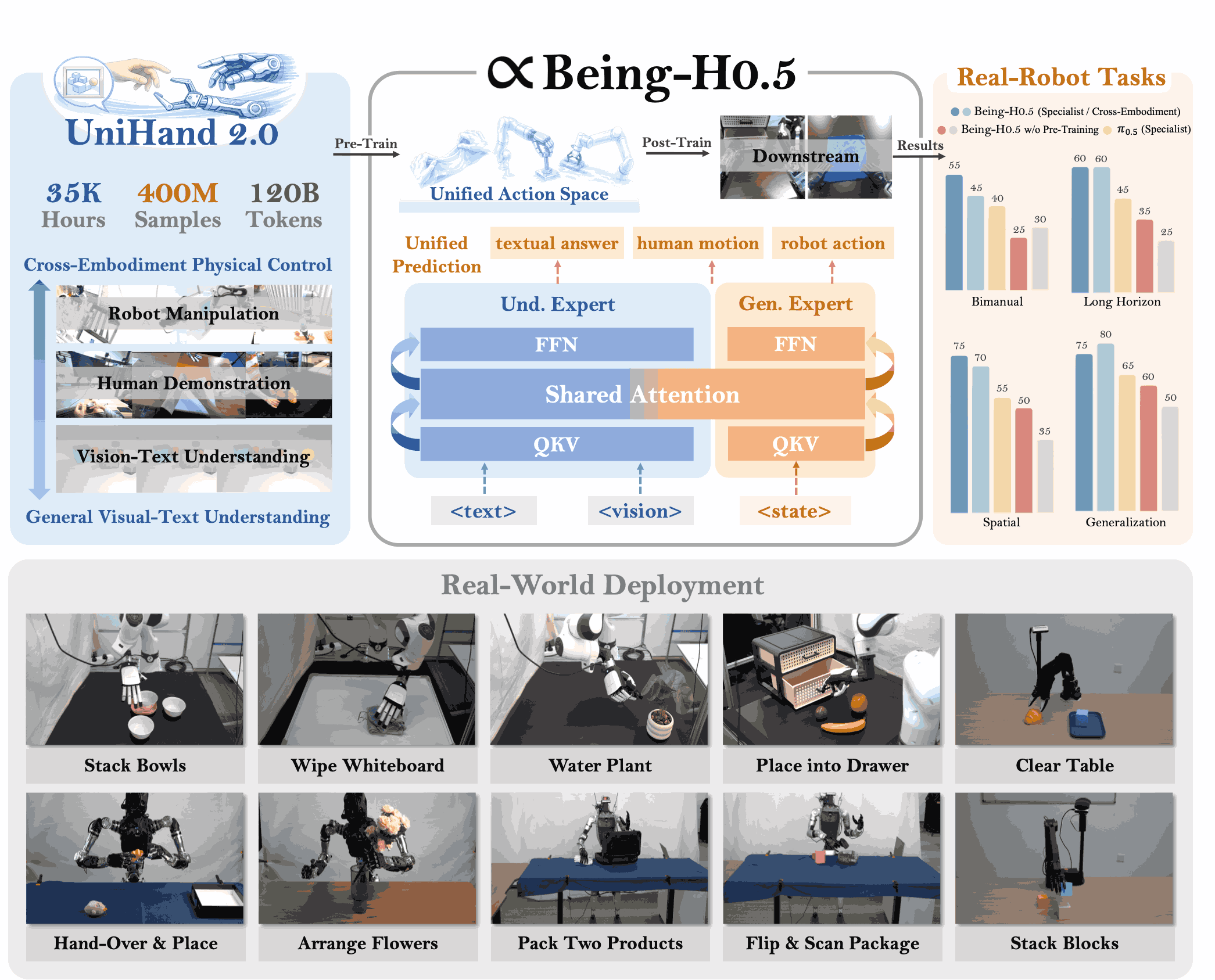

Being-H0.5: Scaling Human-Centric Robot Learning for Cross-Embodiment Generalization

Learning from Videos — VLA

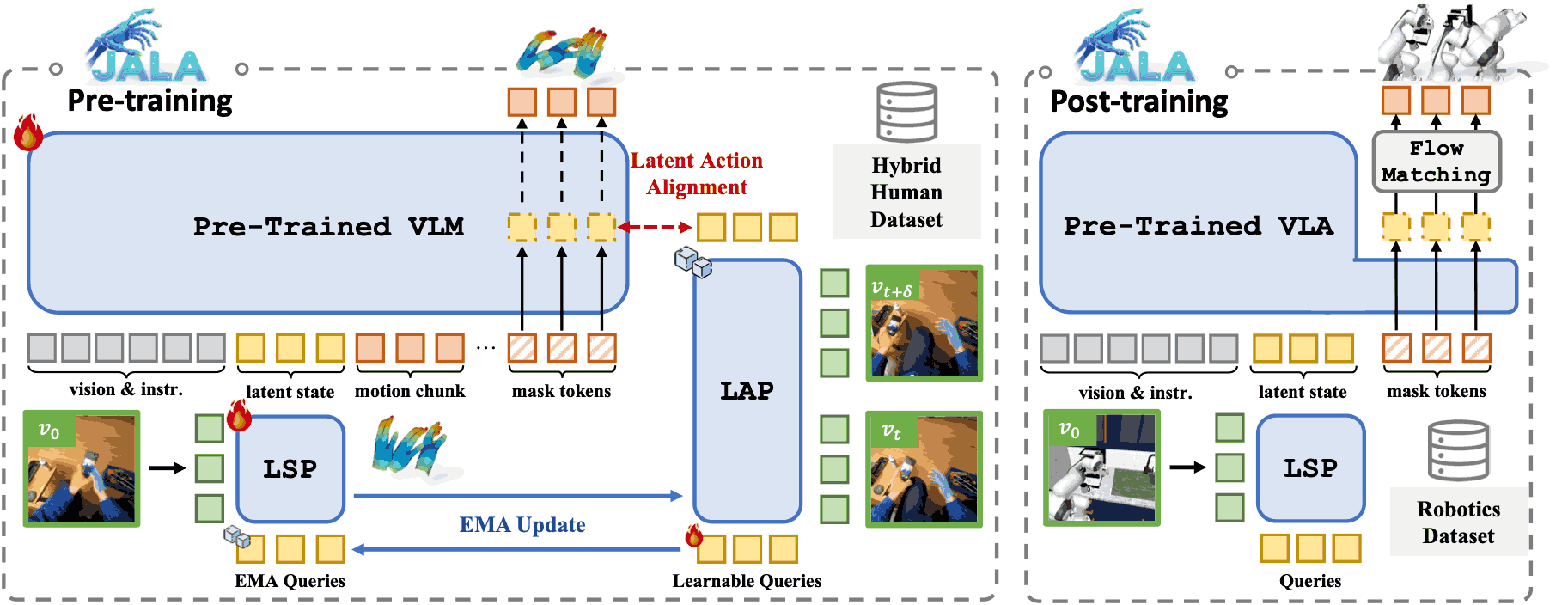

Joint-Aligned Latent Action: Towards Scalable VLA Pretraining in the Wild

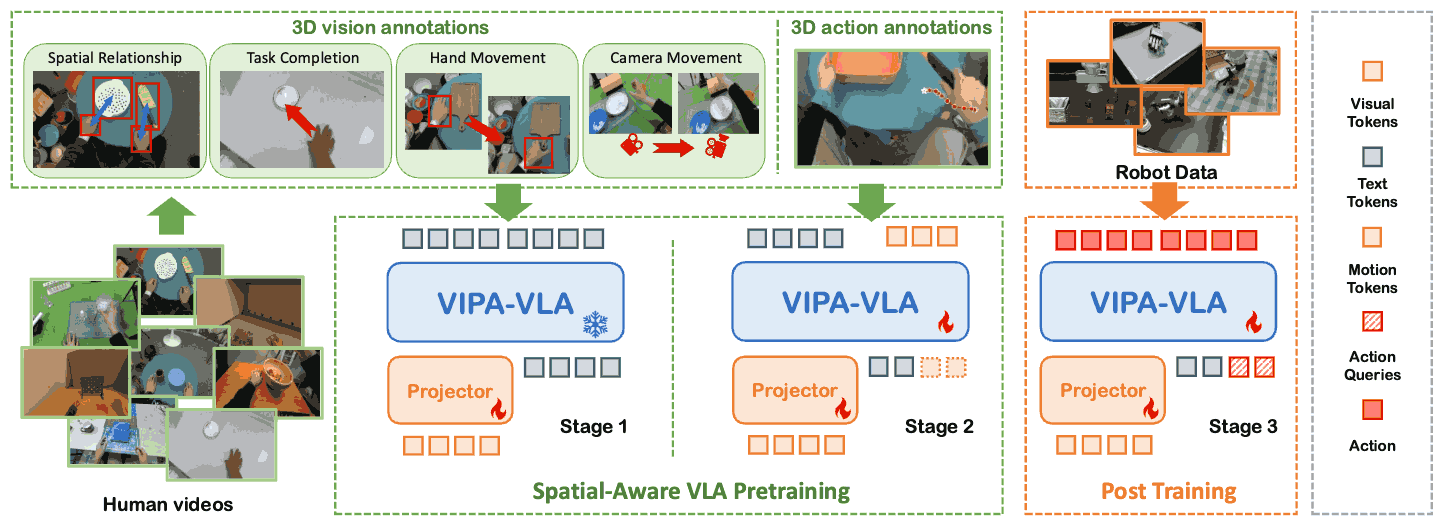

Spatial-Aware VLA Pretraining through Visual-Physical Alignment from Human Videos

Rethinking Visual-Language-Action Model Scaling: Alignment, Mixture, and Regularization

Conservative Offline Robot Policy Learning via Posterior-Transition Reweighting

DiG-Flow: Discrepancy-Guided Flow Matching for Robust VLA Models

Learning from Videos — Visual Policy

Learning Video-Conditioned Policy on Unlabelled Data with Joint Embedding Predictive Transformer

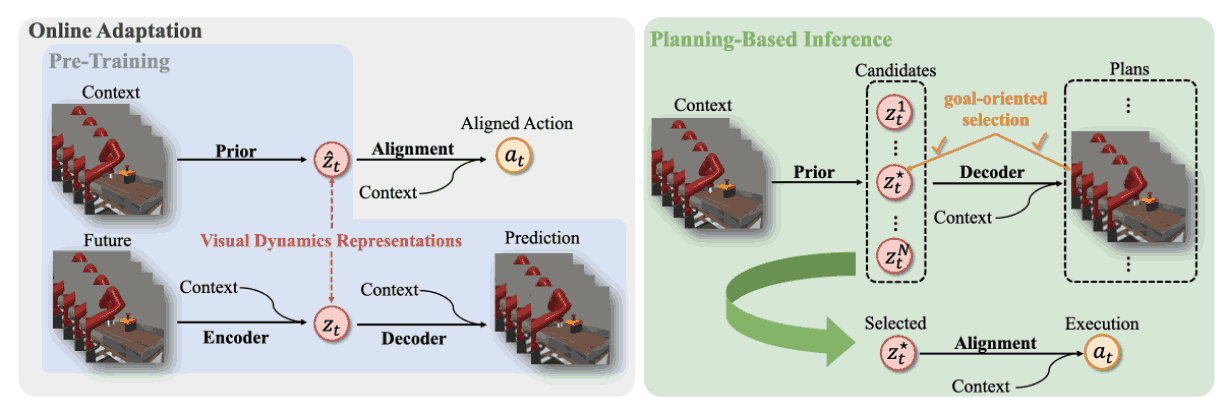

Pre-trained Visual Dynamics Representations for Efficient Policy Learning

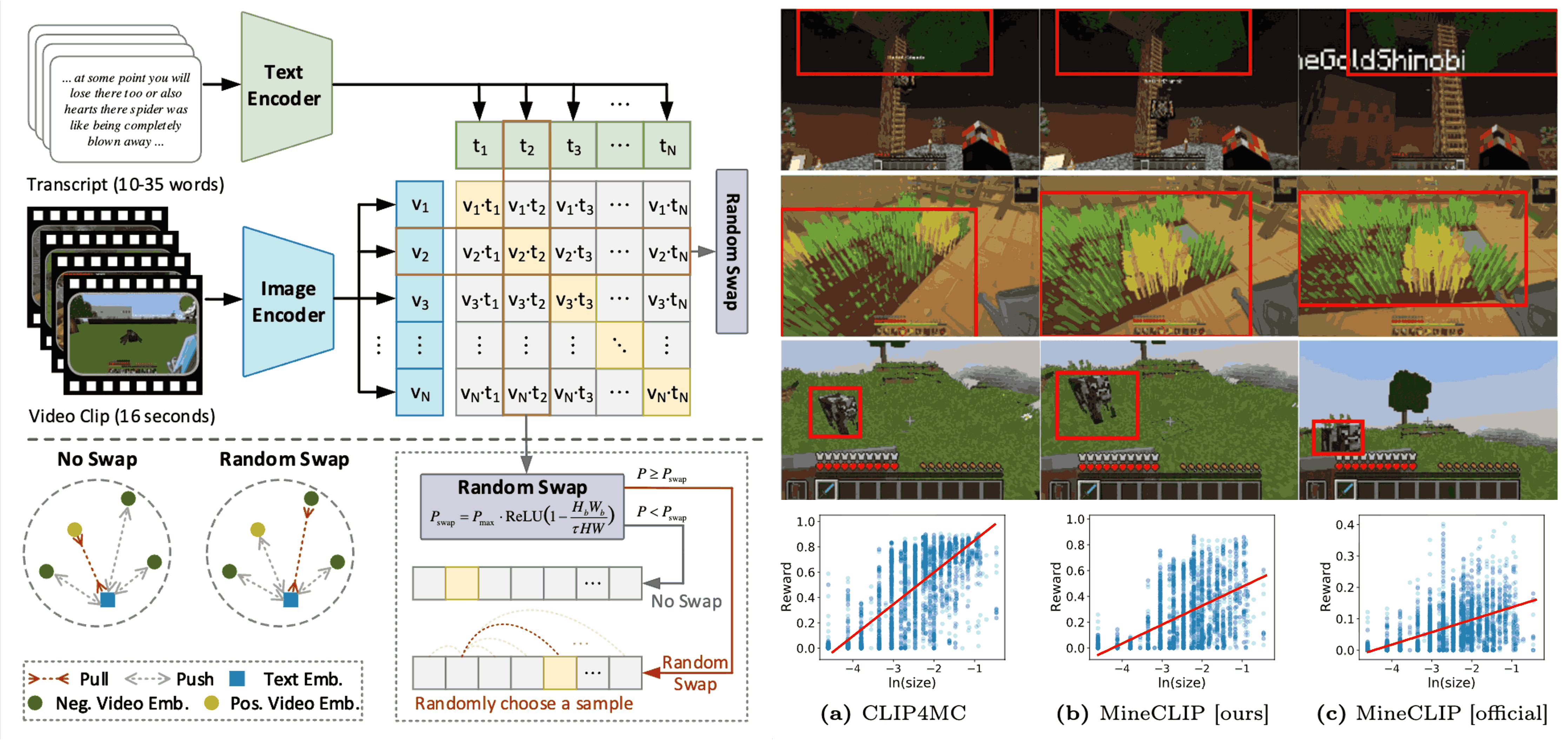

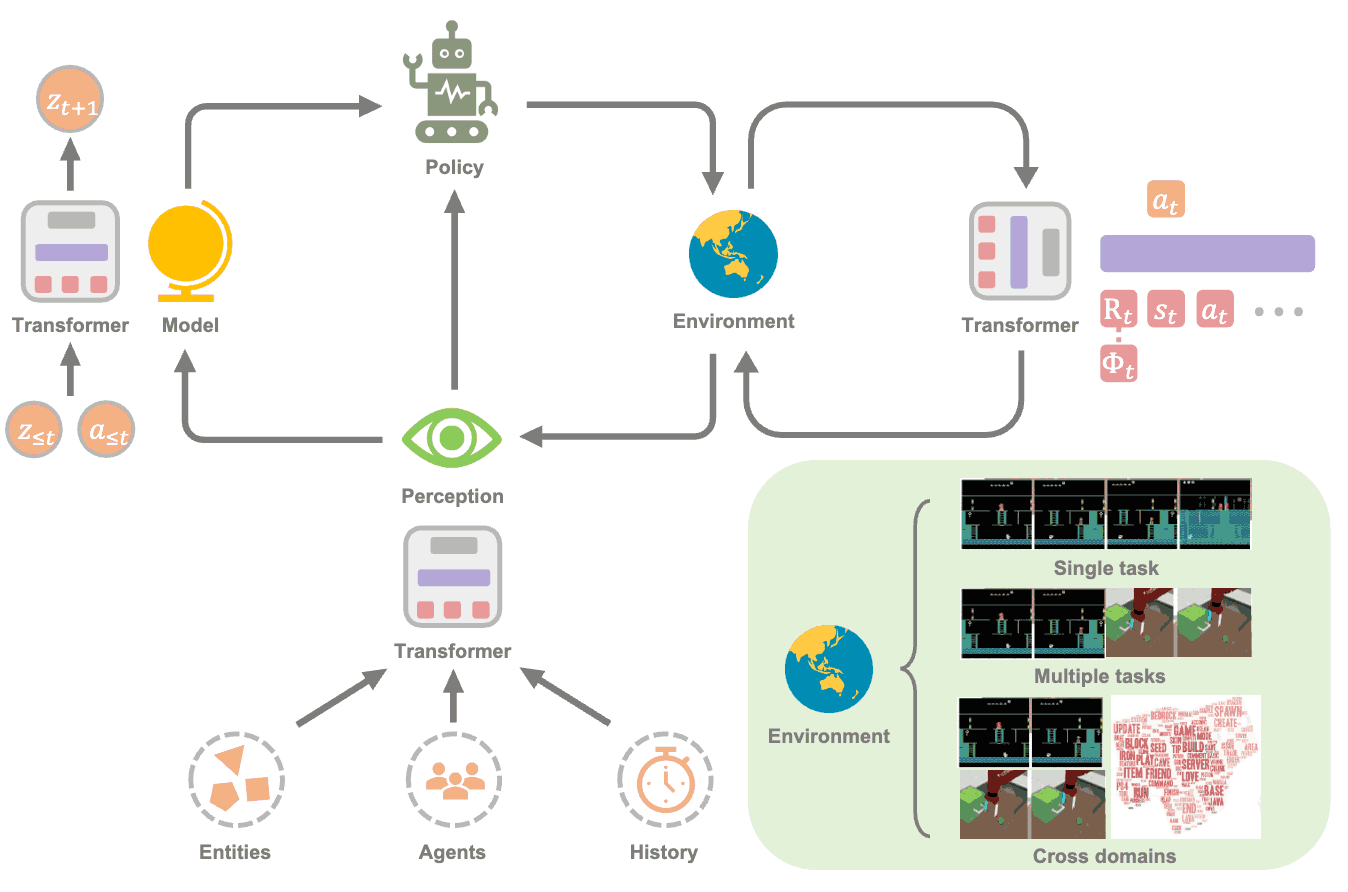

Reinforcement Learning Friendly Vision-Language Model for Minecraft

VLM

OpenMMEgo: Enhancing Egocentric Understanding for LMMs with Open Weights and Data

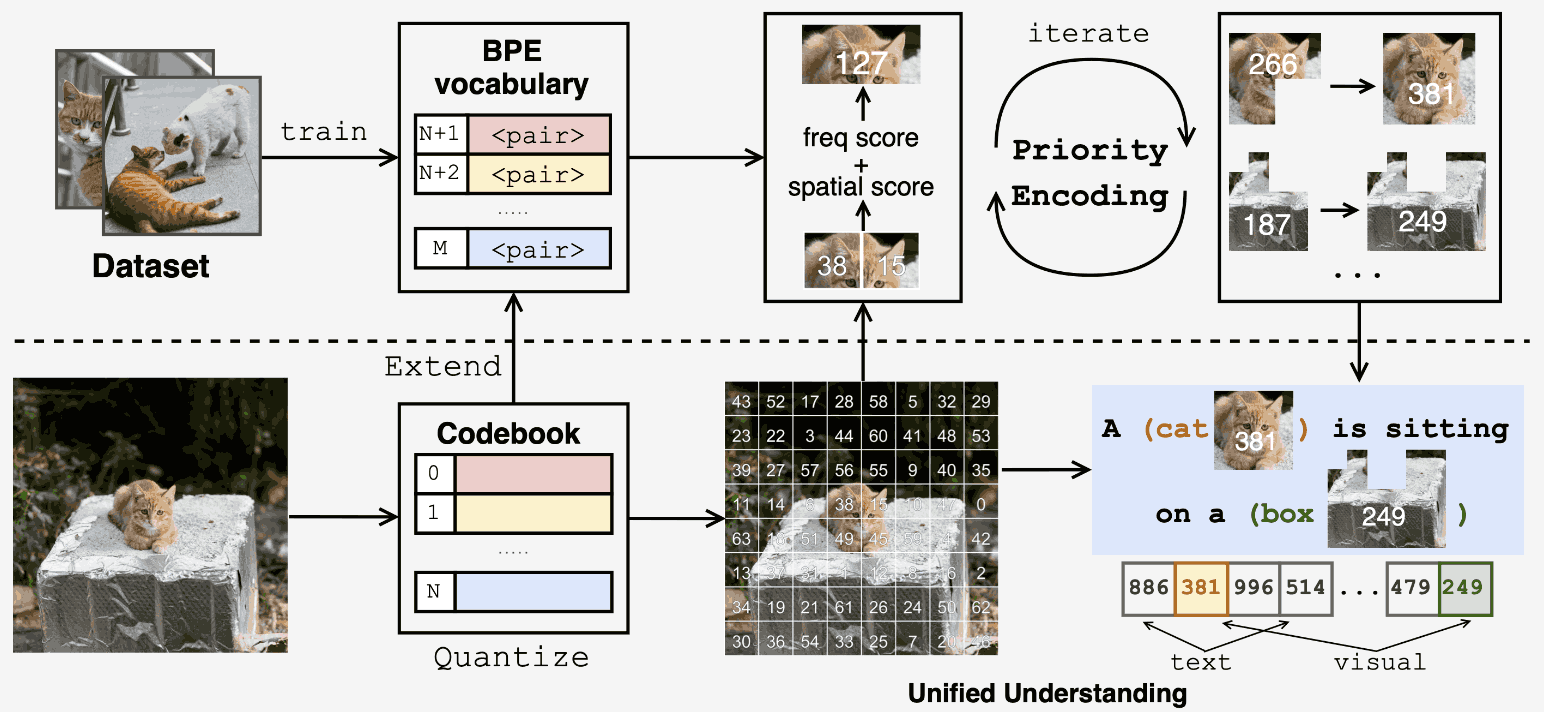

Unified Multimodal Understanding via Byte-Pair Visual Encoding

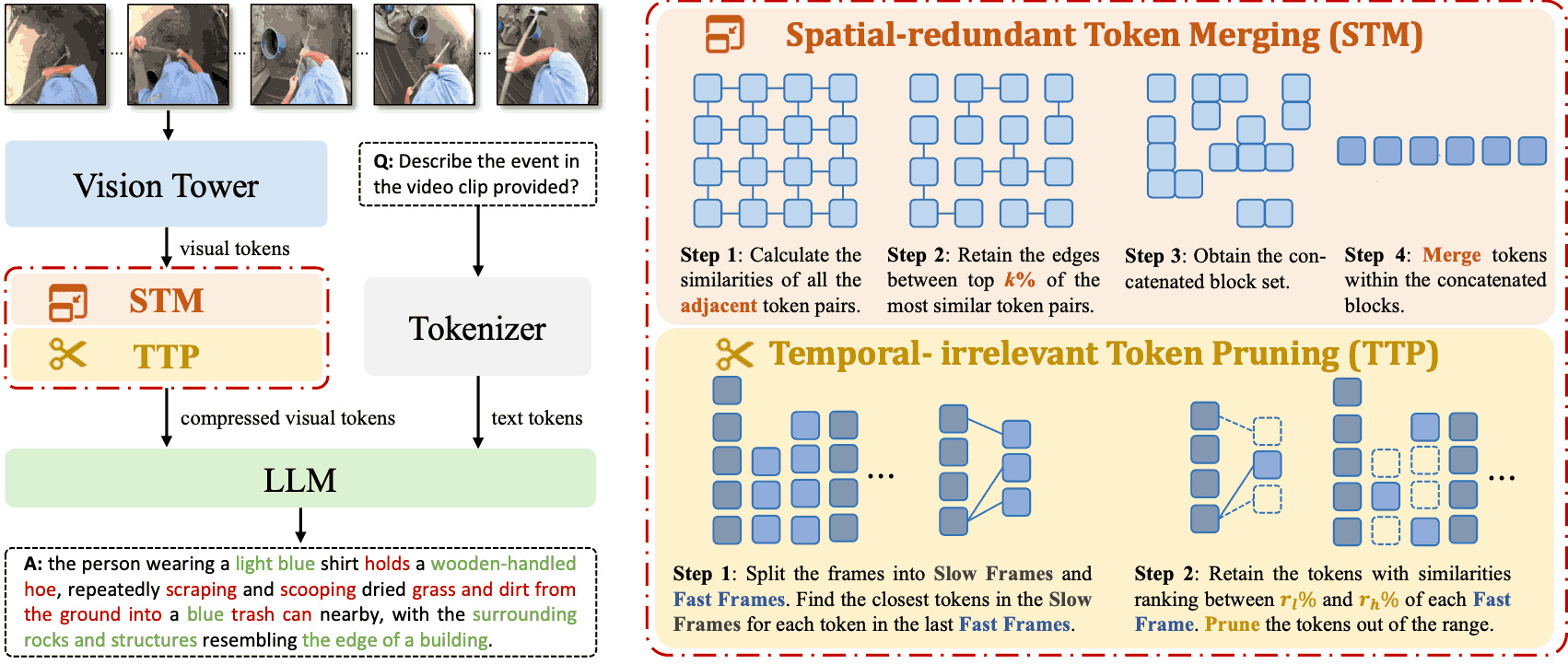

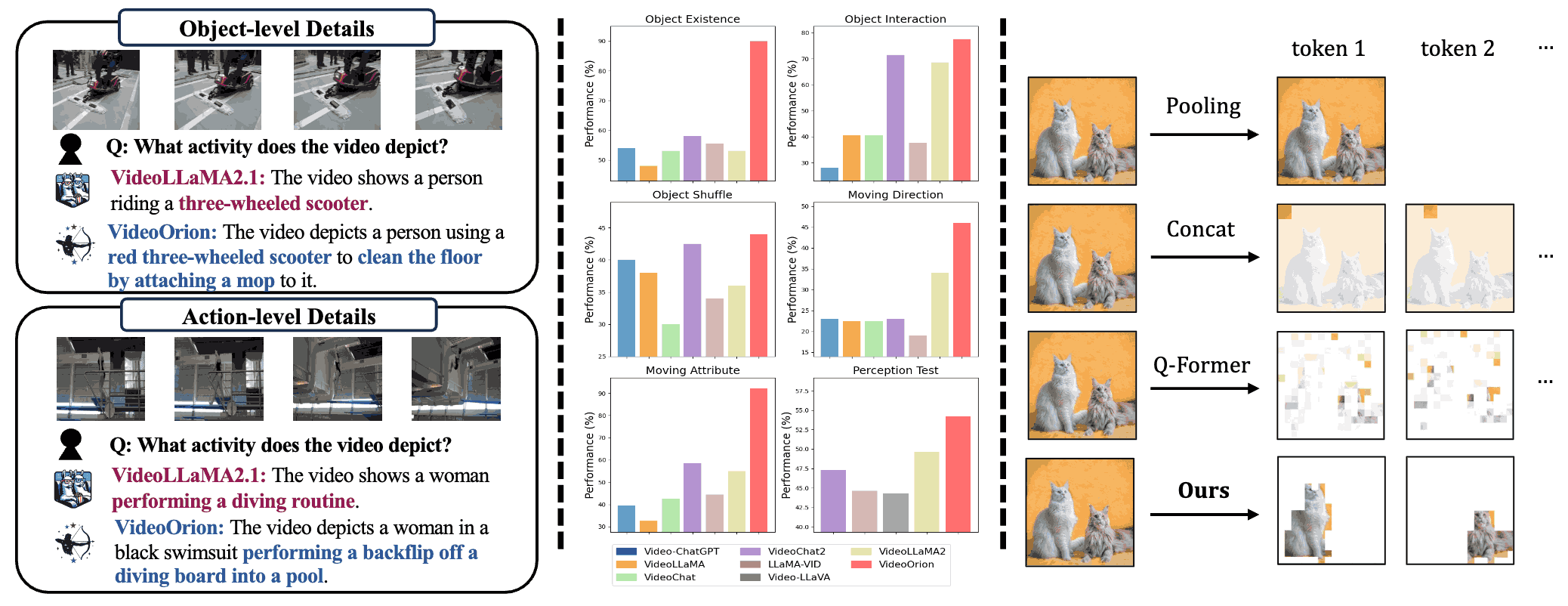

VideoOrion: Tokenizing Object Dynamics in Videos

Other Publications & Preprints

A Survey on Transformers in Reinforcement Learning

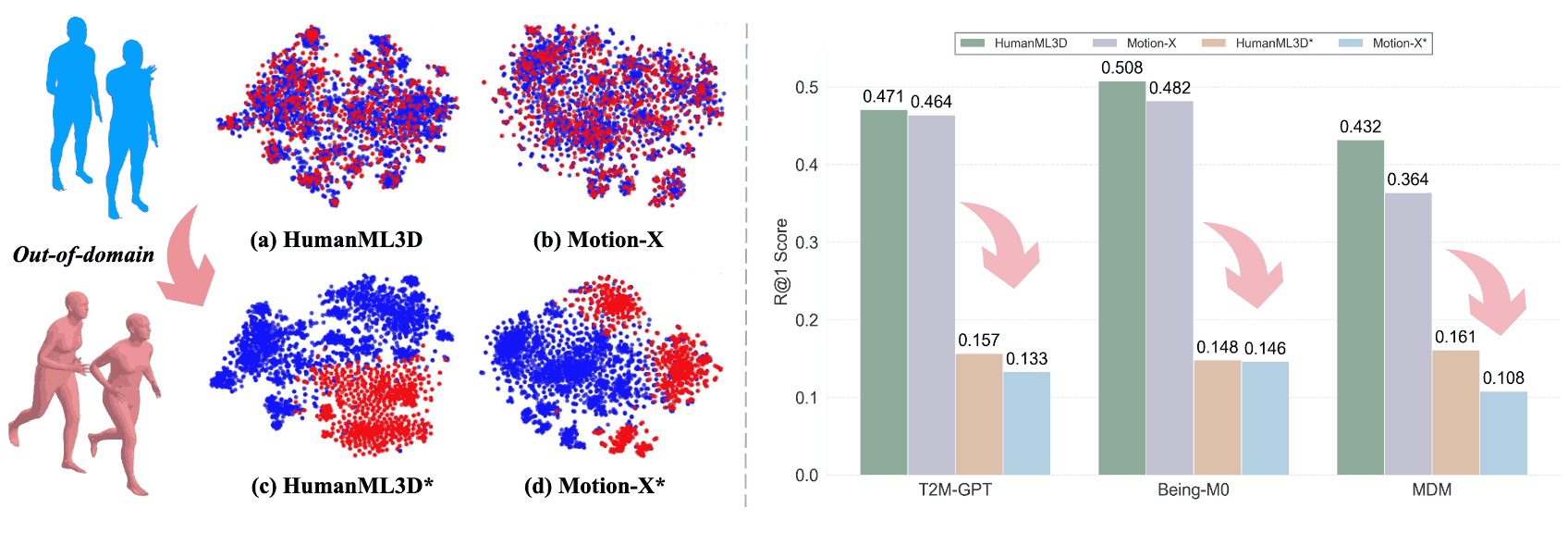

OpenT2M: No-frill Motion Generation with Open-source, Large-scale, High-quality Data

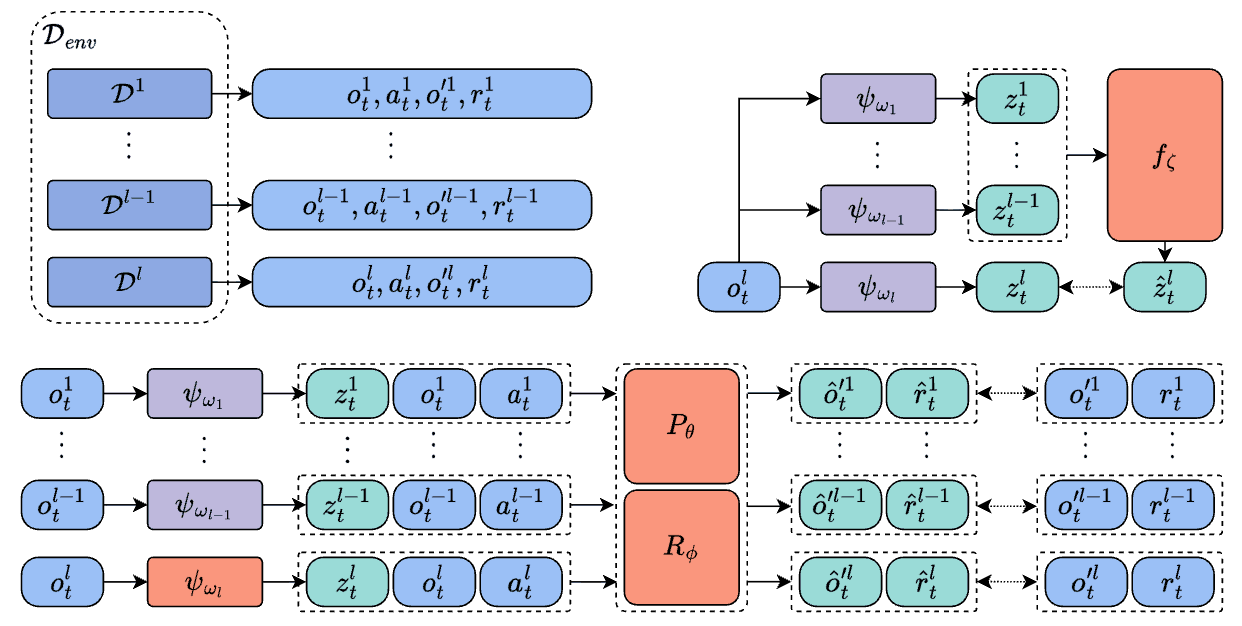

Model-Based Decentralized Policy Optimization